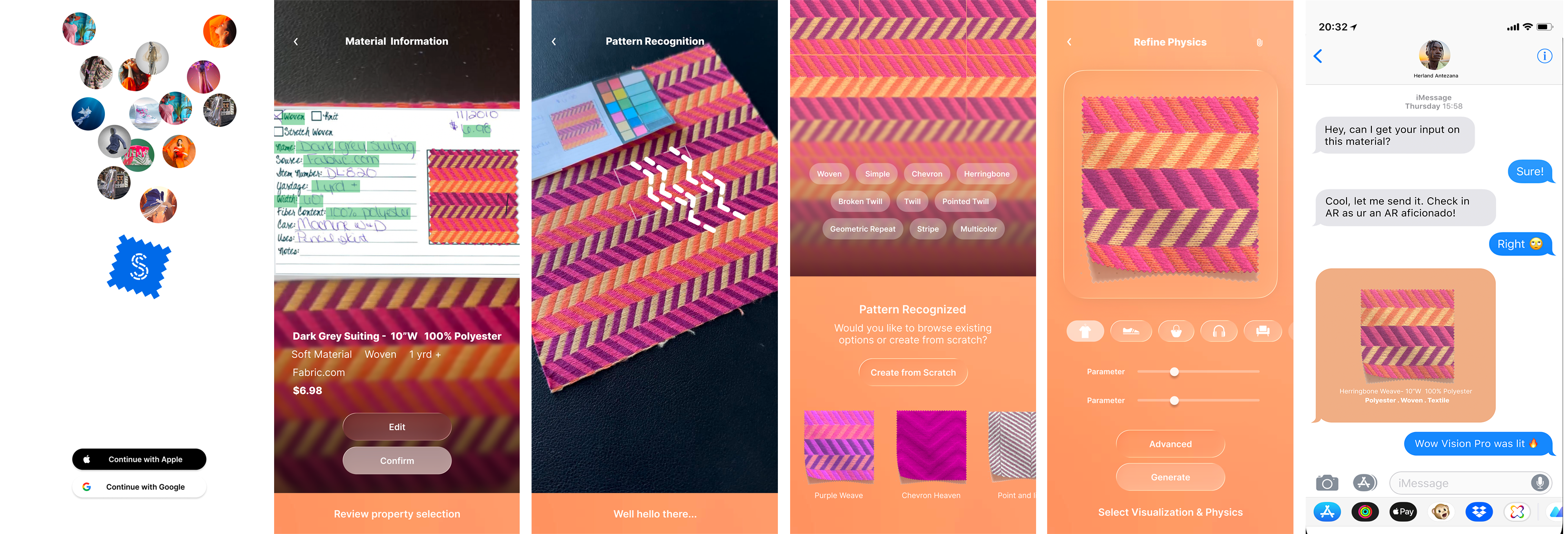

swatchbook capture


Project Overview

Overview

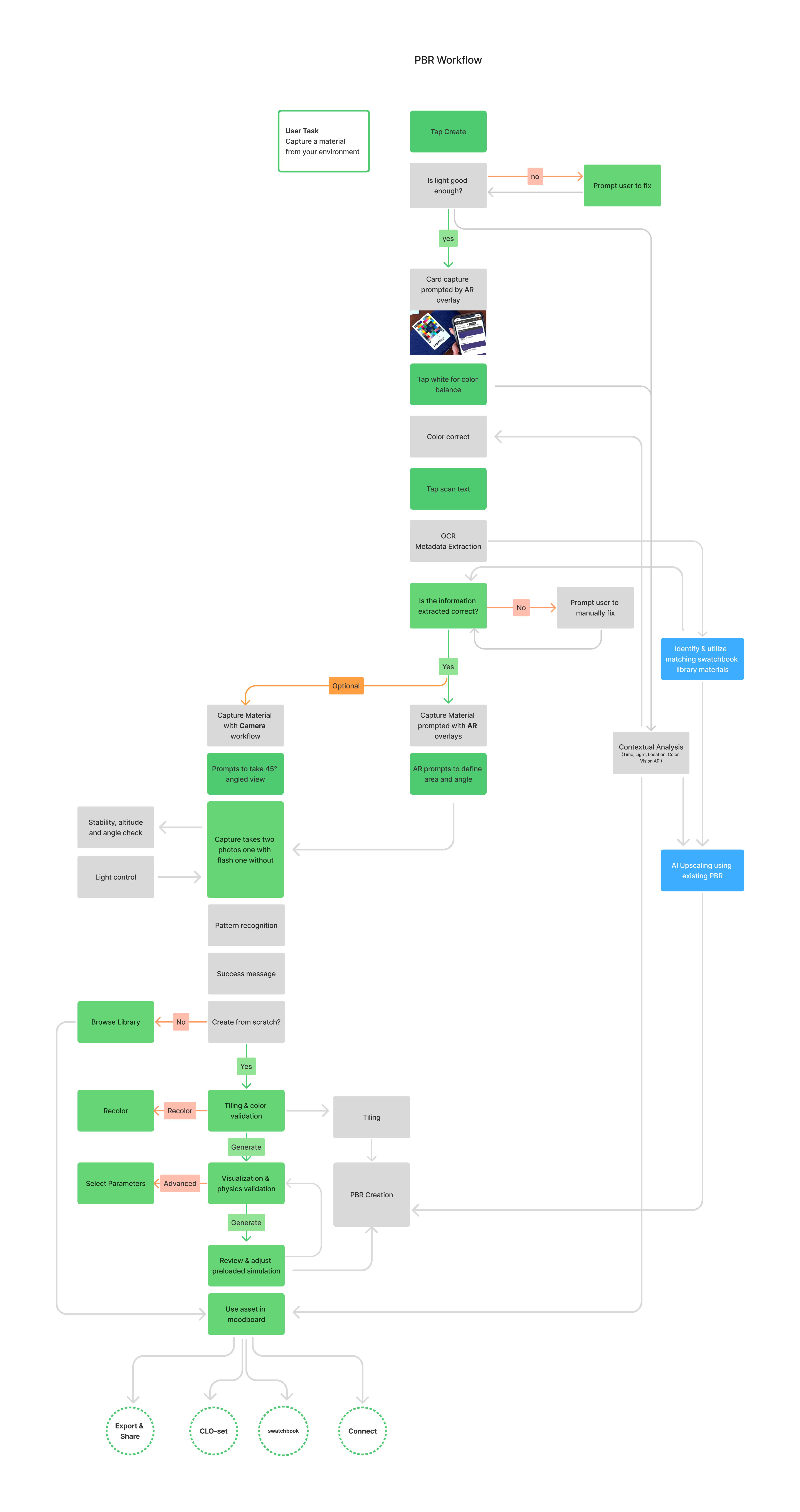

Capture explores how mobile devices can transform physical materials into structured digital assets using AI-assisted capture and classification workflows.

Material digitization traditionally requires specialized scanning equipment, controlled lighting setups, and extensive manual tagging before materials can be used in digital design tools. These workflows are slow, expensive, and difficult to scale across global supply chains.

This project investigates how smartphone sensors, computer vision, and machine learning could streamline this process by guiding users through material capture, extracting metadata automatically, and generating production-ready digital materials.

The exploration focused on designing a reliable capture workflow that combines sensor guidance, AI-assisted tagging, and material analysis while keeping the experience intuitive for designers and material librarians.

Because the project is still under active exploration and involves proprietary technology, only selected elements are shown here.

Execution

AI-Assisted Material Intelligence

A key goal of the project was reducing the manual effort required to create and categorize digital materials.

The system integrates machine learning models, developed by the data science team, that analyze captured material images and suggest metadata. Suggestions follow a standardized material taxonomy that the swatchbook team developed in collaboration with industry partners and clients.

These models cluster visually similar materials and generate suggested tags derived from existing library data.
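As a rough illustration of the idea, and not the project's actual models, tag suggestion from visually similar materials can be sketched as a nearest-neighbour vote over image embeddings. Everything here is an assumption made for the example: the three-dimensional embeddings, the `LIBRARY` records, the `suggest_tags` helper, and the `k` and `min_votes` parameters.

```python
from collections import Counter
from math import sqrt


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = sqrt(sum(x * x for x in a))
    norm_b = sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def suggest_tags(query, library, k=3, min_votes=2):
    """Return (similar_ids, suggested_tags) for a query embedding.

    `library` is a list of dicts with the hypothetical keys
    "id", "embedding", and "tags".
    """
    # Rank library materials by visual similarity to the new capture.
    ranked = sorted(
        library,
        key=lambda m: cosine_similarity(query, m["embedding"]),
        reverse=True,
    )
    neighbours = ranked[:k]
    # A tag is suggested only if enough of the nearest neighbours carry it.
    votes = Counter(tag for m in neighbours for tag in set(m["tags"]))
    suggested = sorted(tag for tag, n in votes.items() if n >= min_votes)
    return [m["id"] for m in neighbours], suggested


# Toy library; real embeddings would come from a vision model.
LIBRARY = [
    {"id": "mat-001", "embedding": [0.9, 0.1, 0.0], "tags": ["leather", "matte"]},
    {"id": "mat-002", "embedding": [0.8, 0.2, 0.1], "tags": ["leather", "embossed"]},
    {"id": "mat-003", "embedding": [0.1, 0.9, 0.2], "tags": ["knit", "mesh"]},
    {"id": "mat-004", "embedding": [0.85, 0.15, 0.05], "tags": ["leather", "matte"]},
]

similar_ids, tags = suggest_tags([0.88, 0.12, 0.02], LIBRARY)
print(similar_ids, tags)
```

Requiring a minimum number of votes keeps a tag that appears on only one neighbour from being suggested, which is one simple way such a system could favor consistency with existing library data.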

Within the capture workflow, AI assists users by:

• suggesting metadata tags based on visual material characteristics

• identifying visually similar materials already present in the library

• helping initialize parameters for texture reconstruction and material simulation

• improving consistency across the material database

Rather than replacing user control, the system was designed to present AI suggestions that users can quickly confirm or adjust before finalizing the asset.
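One way to picture this confirm-or-adjust step is a small draft object that holds pending suggestions until the user resolves each one. This is a minimal sketch under assumed names (`TagSuggestion`, `MaterialDraft`, and their methods are hypothetical), not a description of how the product stores its data.

```python
from dataclasses import dataclass, field


@dataclass
class TagSuggestion:
    tag: str
    confidence: float
    status: str = "pending"  # "pending", "accepted", or "rejected"


@dataclass
class MaterialDraft:
    name: str
    suggestions: list = field(default_factory=list)
    manual_tags: list = field(default_factory=list)

    def _set_status(self, tag, status):
        for s in self.suggestions:
            if s.tag == tag:
                s.status = status
                return
        raise KeyError(f"no suggestion for tag {tag!r}")

    def accept(self, tag):
        """User confirms an AI-suggested tag."""
        self._set_status(tag, "accepted")

    def reject(self, tag):
        """User dismisses an AI-suggested tag."""
        self._set_status(tag, "rejected")

    def add_manual(self, tag):
        """User adds a tag the model did not suggest."""
        if tag not in self.manual_tags:
            self.manual_tags.append(tag)

    def finalize(self):
        """Return the final tag list; every suggestion must be resolved first."""
        pending = [s.tag for s in self.suggestions if s.status == "pending"]
        if pending:
            raise ValueError(f"unreviewed suggestions: {pending}")
        accepted = [s.tag for s in self.suggestions if s.status == "accepted"]
        return sorted(set(accepted) | set(self.manual_tags))


draft = MaterialDraft(
    name="Sample swatch",
    suggestions=[TagSuggestion("leather", 0.94), TagSuggestion("embossed", 0.41)],
)
draft.accept("leather")
draft.reject("embossed")
draft.add_manual("full-grain")
final_tags = draft.finalize()
print(final_tags)
```

Blocking `finalize` while suggestions are still pending reflects the design intent stated above: the AI proposes, but nothing enters the asset without an explicit user decision.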

Further details can be shared in interviews.